Gemma 4 is Google DeepMind’s latest open-weight AI model family, built to deliver near-frontier capabilities with efficient parameter sizes, strong multimodal reasoning, and long context windows, all under a permissive Apache 2.0 license for real-world use.

It stands out by combining Gemini-class research with efficient parameter counts, agentic workflows, and deployment that spans edge devices and high-performance workstations, so developers can run advanced models locally instead of relying solely on proprietary cloud APIs.

What Is Gemma 4?

Gemma 4 is Google DeepMind’s latest open‑weight model family, built from the same research and technology as the Gemini 3 frontier models but released for developers to run directly on their own devices. It supports text, images and (on some variants) audio as input, and is positioned as Google’s “most intelligent open models to date.”

Key official overviews you should link to:

- Gemma 4 – Google DeepMind models page

- Gemma 4 – Google AI for Developers overview

- Gemma 4 Google Blog announcement

From a developer‑SEO angle, it’s natural to anchor your main keyword like this: “With Gemma 4, Google is pushing open‑source LLMs closer to proprietary frontier models while keeping them runnable on consumer‑grade GPUs.”

Gemma 4 Model Family and Sizes

Rather than a single monolithic model, Gemma 4 is a small family of open‑weight variants designed to cover phones, edge devices, workstations, and cloud GPUs.

According to Google’s docs and launch write‑ups, the Gemma 4 lineup includes:

- E2B (Effective 2B) – edge / on‑device

- E4B (Effective 4B) – stronger edge and mobile

- 26B Mixture‑of‑Experts (MoE) – workstation / high‑end GPU

- 31B Dense – flagship open model

The official model overview confirms that Gemma comes in four parameter sizes (E2B, E4B, 26B, 31B) and that all of them can run at 16‑bit or in lower‑precision quantised formats for lighter hardware. TNW summarises this as “four open‑weight models from E2B edge to 31B dense,” showing how the family spans everything from Raspberry Pi‑class boards to 80GB H100s.
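To make the 16‑bit versus quantised trade‑off concrete, here is a minimal back‑of‑the‑envelope sketch in Python. It assumes weight memory is simply parameter count times bits per parameter, uses the nominal 26B and 31B figures, and ignores the KV cache, activations and runtime overhead, so real usage will be higher. The function name and the dictionary are illustrative, not part of any Google tooling. The E2B and E4B models are left out because their "effective" sizes may not match the number of stored parameters.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes).

    Assumption: every parameter is stored at the same precision and
    nothing else (KV cache, activations, runtime) is counted.
    """
    total_bytes = params_billions * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


# Nominal sizes taken from the model names; treated as assumptions here.
NOMINAL_SIZES_B = {"26B MoE": 26.0, "31B Dense": 31.0}

for name, size in NOMINAL_SIZES_B.items():
    for bits in (16, 8, 4):
        gb = weight_memory_gb(size, bits)
        print(f"{name} @ {bits}-bit: ~{gb:.1f} GB of weights")
```

Under these assumptions the 31B dense model needs roughly 62 GB of weights at 16‑bit, which is consistent with it targeting 80GB‑class accelerators unquantised, while a 4‑bit format brings the same weights down to about 15.5 GB.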

Helpful links for this section:

- Gemma 4 model overview | Google AI for Developers

- Constellation Research – Google launches Gemma 4 open‑source LLM family

- TNW – Google launches Gemma 4, four open‑weight models

You can write, for example: “The Gemma 4 family spans from a tiny 2B edge model to a 31B dense model that ranks among the top open LLMs on Arena AI, giving teams options from mobile apps to workstation inference.”

Core Capabilities: Multimodal, Long‑Context, Agentic

What really differentiates Gemma is not just size, but the capabilities Google has pushed into its open weights.

From Google AI docs and the Gemma 4 model card:

- Multimodal: text, images, and (on some variants) audio input, with text output.

- Long context: up to 128K tokens on the small models and up to 256K tokens on the larger ones.

- 140+ languages: broad multilingual coverage.

- Built‑in function calling / tool use and system prompts for structured agents.

The docs summarise the top‑level positioning as: “Gemma: solve a wide variety of generative AI tasks with text, audio and image input, support for over 140 languages, and long 128K and up to 256K context window.” The dedicated model card confirms open weights, instruction‑tuned variants, multimodal inputs, and the 256K context window in more detail.
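As a rough illustration of what those context limits mean in practice, the sketch below checks whether a piece of text is likely to fit in a given window. It is only an estimate: the four‑characters‑per‑token ratio is a common rule of thumb for English text rather than a property of Gemma 4's tokenizer, "128K" and "256K" are read conservatively as 128,000 and 256,000 tokens, and the output reserve is an arbitrary placeholder. All names are illustrative.

```python
import math

# Assumption: conservative reading of the advertised window sizes.
CONTEXT_LIMITS = {"small (128K)": 128_000, "large (256K)": 256_000}

# Assumption: rough heuristic for English prose, not a real tokenizer.
CHARS_PER_TOKEN = 4.0


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Estimate the token count from character length alone."""
    return math.ceil(len(text) / chars_per_token)


def fits(text: str, limit: int, reserve_for_output: int = 4_000) -> bool:
    """Return True if the estimated prompt plus an output reserve fits."""
    return estimate_tokens(text) + reserve_for_output <= limit


if __name__ == "__main__":
    document = "x" * 600_000  # stand-in for a long contract or log file
    for label, limit in CONTEXT_LIMITS.items():
        print(label, "fits" if fits(document, limit) else "does not fit")
```

For anything near the limit, counting tokens with the model's actual tokenizer is the safer option, since code and non‑English text often tokenise less efficiently than this heuristic assumes.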

A natural sentence using your keyword: “Thanks to its 256K‑token context and multimodal inputs, Gemma 4 can take in a sizeable codebase or a long legal document in a single window, something many earlier open models struggled with.”

Intelligence‑per‑Parameter and Performance

One of the big talking points around Gemma is “intelligence‑per‑parameter”: how much capability you get for each parameter you run.

Google’s launch blog highlights that the 31B dense model is ranked around #3 on the Arena AI open‑model text leaderboard, and the 26B MoE is around #6, outperforming “models 20× its size” on standard benchmarks. Constellation Research echoes that the 26B and 31B models “provide more intelligence per parameter” and deliver frontier‑level capabilities with significantly less hardware overhead. The Deep View notes that Gemma 4 “represents a massive leap from its predecessor and outperforms bigger models” while staying small enough to fine‑tune on consumer GPUs.

Useful background and comparison links:

- Google Blog – Gemma 4: Our most capable open models to date

- The Deep View – Google rethinks the AI model race with Gemma 4

- Constellation Research – Gemma 4 open‑source LLM family

You can contrast it with older Gemma or GPT‑class models by referencing existing comparisons like: Gemma AI vs GPT‑4: Which Language Model Is Better?

Edge and On‑Device Focus

Another big differentiator: Gemma is explicitly designed for edge and on‑device use.

Google and several developer advocates emphasise:

- E2B and E4B are “Edge Models” that run on phones, Raspberry Pi, Jetson Nano, and similar low‑power hardware.

- They are also the foundation for Gemini Nano 4, Google’s next‑gen on‑device model for Android phones.

- Workstation models (26B MoE and 31B) are optimised for consumer GPUs; quantised, they can run locally on high‑end desktops without requiring cloud calls.

TNW reports that the E2B and E4B models come from work with Pixel, Qualcomm and MediaTek, and are intended to run on‑device, while the 26B and 31B are aimed at offline use on developer hardware and consumer GPUs. A developer‑focused LinkedIn breakdown explains that the 26B MoE and 31B dense models are “Workstation Models” with 256K context, meant for IDE integrations, local coding assistants and secure local AI servers.
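One way to reason about "runs on a consumer GPU when quantised" is to ask which precision fits a given amount of VRAM. The sketch below is a simplification under stated assumptions: it counts only the weights, keeps a fixed 20% of VRAM free as headroom for the KV cache and runtime, and considers just 16‑, 8‑ and 4‑bit formats. The function name and the headroom value are hypothetical choices for illustration.

```python
from typing import Optional


def pick_precision(
    params_billions: float,
    vram_gb: float,
    headroom_fraction: float = 0.2,  # assumption: share of VRAM kept free
) -> Optional[int]:
    """Return the highest of 16/8/4 bits whose weights fit, else None."""
    usable_gb = vram_gb * (1 - headroom_fraction)
    for bits in (16, 8, 4):
        weights_gb = params_billions * bits / 8  # billions of params -> GB
        if weights_gb <= usable_gb:
            return bits
    return None


if __name__ == "__main__":
    for vram in (16, 24, 80):
        print(f"31B on {vram} GB:", pick_precision(31.0, vram))
```

With these assumptions, a nominal 31B model fits a 24 GB card only at 4‑bit, fits an 80 GB card at 16‑bit, and does not fit a 16 GB card at any of the three precisions. Long prompts enlarge the KV cache, so the headroom needed in practice grows with context length.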

Good links for this topic:

- Introducing Gemma 4 – Google AI devs (LinkedIn)

- Addy Osmani’s Gemma 4 workstation/edge breakdown

- How to build on‑device AI with Gemma 4 (YouTube)

A natural way to use your keyword: “For teams that want low‑latency, private inference, the edge‑ready Gemma 4 variants can run on mobile or embedded devices without sending data to the cloud.”

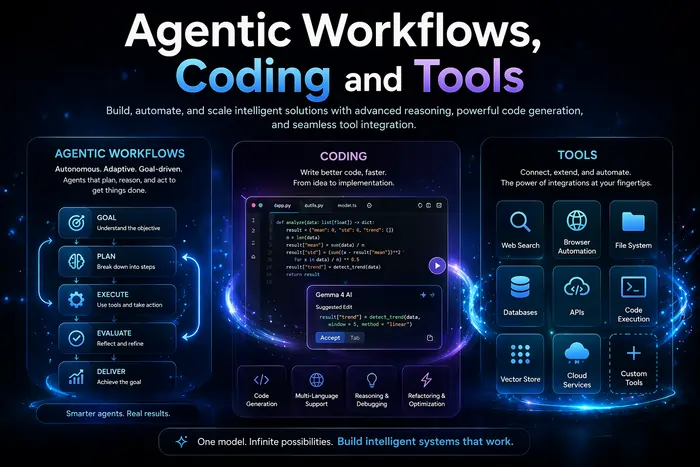

Agentic Workflows, Coding and Tools

Gemma is also built “for the agentic era,” meaning it’s designed to run tool‑using, multi‑step agents rather than just respond to single prompts.

From the dev and product posts:

- Native tool use / function calling: you can wire Gemma directly into tools, APIs and databases.

- Multi‑step planning and complex logic: support for more sophisticated chains and autonomous agents.

- Enhanced coding: strong performance on coding benchmarks and code‑assistant use cases (IDE plugins, local copilots, offline code review).

- Native system prompt support: built‑in role separation for more controlled, structured conversations.

Google AI docs explicitly highlight “enhanced coding & agentic capabilities” and native system prompt support as key upgrades in Gemma 4 compared with earlier releases. Addy Osmani’s breakdown calls out Gemma as “built for action, agents, and multimodality,” with local vision/audio and tool use baked in.
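To show what wiring a model into tools can look like on the application side, here is a minimal, model‑agnostic dispatch sketch. It does not call Gemma 4 and does not reproduce its actual function‑calling format: the JSON shape with "name" and "arguments" keys, the `tool` decorator, the `get_weather` stub and `fake_model_output` are all hypothetical stand‑ins. In a real agent, the string passed to `dispatch` would come from the model, and the result would be fed back to it as the next turn.

```python
import json
from typing import Any, Callable, Dict

# Registry of Python functions the agent is allowed to call.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function under its own name and return it unchanged."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_weather(city: str) -> str:
    """Stub tool; a real one would query an API or database."""
    return f"(stub) weather for {city}"


def dispatch(model_output: str) -> Any:
    """Parse a JSON tool call and run the matching registered function.

    Assumed format (hypothetical): {"name": ..., "arguments": {...}}
    """
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


# Stand-in for text a model might emit when it decides to use a tool.
fake_model_output = '{"name": "get_weather", "arguments": {"city": "Sydney"}}'

if __name__ == "__main__":
    print(dispatch(fake_model_output))
```

Keeping an explicit registry means the model can only trigger functions you have deliberately exposed, which matters once an agent is allowed to act over several steps.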

Supporting links:

- Gemma 4 model overview – coding & agentic section

- The Deep View – agentic workflows in Gemma 4

- HitPaw – Google Gemma 4: Features & Comparisons

Example sentence: “If you want to build local‑first agents that can plan, call tools, and refactor code offline, Gemma 4 offers built‑in function calling and extended context tailored to those agentic workflows.”

Licensing and Ecosystem (Apache‑2.0)

A major shift with Gemma is licensing and distribution.

Articles and the official docs note:

- Gemma 4 weights are released under the Apache 2.0 license, which is more permissive than the terms of earlier Gemma releases.

- Models are available through Google AI Studio, AI Edge Gallery, and popular third‑party ecosystems like Hugging Face, Kaggle, Ollama, Nvidia NIM/NeMo and Docker images.

- This makes it easier to integrate Gemma 4 into commercial products, fine‑tune it, and redistribute within your own apps, compared with more restrictive licenses.

TNW calls the Apache 2.0 license “a significant shift from previous Gemma releases.” Constellation Research emphasises that Gemma 4 is accessible via both Google tooling and external platforms for rapid adoption. Google’s own launch post mentions deployment via AI Studio, AI Edge Gallery, Hugging Face, Nvidia NIM, NeMo and others.

Relevant links:

- Gemma 4 model card – license section

- Google Blog – Gemma 4 launch post

- TNW – Apache 2.0 details for Gemma 4

You can phrase it like: “By releasing Gemma 4 under Apache 2.0, Google has made its most capable open models viable for serious commercial products, not just research experiments.”

Practical Use Cases for Gemma 4

Pulling all of this together, where does Gemma 4 shine in practice?

From Google’s docs and independent write‑ups, standout use cases include:

- Local coding assistants and IDE copilots (workstation models).

- On‑device chatbots, summarisation and translation on Android and edge devices (E2B/E4B).

- Multimodal agents that can read images or screenshots and then call tools and APIs.

- Knowledge workers analysing long documents, contracts or logs in a single context window.

- Privacy‑sensitive scenarios where data cannot leave an on‑prem or air‑gapped environment.

HitPaw’s guide notes that Gemma 4 is particularly comfortable handling everyday writing, brainstorming and creative tasks while still offering stronger reasoning and longer memory than earlier Gemma releases. The Deep View stresses that Gemma 4 repositions Google in the “open vs closed model race” by making serious local‑first AI more accessible.

External resources to link for use‑case examples:

- Google DeepMind – Gemma 4 product page

- HitPaw – Google Gemma 4: Features & Comparisons

- The Deep View – Google rethinks the AI model race with Gemma 4

Conclusion

Gemma 4 stands out because it compresses a lot of frontier‑style capability into open, Apache‑licensed models that can actually run on real‑world hardware, from Android phones to single‑GPU workstations. With multimodal support, 128K–256K context windows, strong coding and agentic features, and a focus on “intelligence‑per‑parameter,” Gemma 4 is positioned as a serious option for developers and organisations that want local‑first AI without giving up too much performance compared with proprietary giants.

FAQs About Gemma 4

What is Gemma 4?

Gemma 4 is an open-weight large language model family from Google DeepMind, offering text and multimodal capabilities, long context windows, and near-frontier performance.

Who created Gemma?

It was developed by Google DeepMind alongside Google’s AI teams, building on the Gemini research stack.

How is Gemma 4 different from earlier versions?

It introduces stronger reasoning, longer context, better coding ability, and improved agentic behavior, with optimisation for edge and on-device use.

What parameter sizes are available?

The family has four sizes: E2B and E4B for edge and on-device use, plus a 26B Mixture-of-Experts model and a 31B dense model for workstations and high-end GPUs.

Is Gemma multimodal?

Yes—Gemma supports text and image input, with some versions also handling audio, enabling multimodal workflows.

How long is the context window?

The smaller models support up to 128K tokens and the larger models up to 256K tokens, enough for long documents and complex tasks.

What license does Gemma use?

It uses the permissive Apache 2.0 license, which allows commercial use, fine-tuning, and redistribution.

Can Gemma run on-device?

Yes—smaller models are optimised for mobile and edge devices, making local AI deployment possible.

What are common use cases?

Use cases include coding assistants, chatbots, document analysis, RAG systems, and multimodal AI agents.

How does it compare to GPT-4-class models?

Gemma 4 aims for competitive performance with fewer parameters and the advantage of being open-weight.

Is Gemma good for coding?

Yes—it supports code generation, debugging, and refactoring, especially in developer tools and IDEs.

Does it support agents and tool use?

Yes—it is designed for agentic workflows, including API/tool calling and multi-step reasoning tasks.

How can developers access it?

Developers can download the weights from hubs such as Hugging Face and Kaggle, run them locally through Ollama or Docker images, or access them via Google AI Studio, AI Edge Gallery, and Nvidia NIM/NeMo.

Can I fine-tune Gemma?

Yes—the open-weight nature allows custom fine-tuning on private datasets for specialised applications.

Who should use Gemma?

It’s ideal for teams needing flexible, high-performance AI, especially those wanting local deployment, cost control, and open licensing freedom.